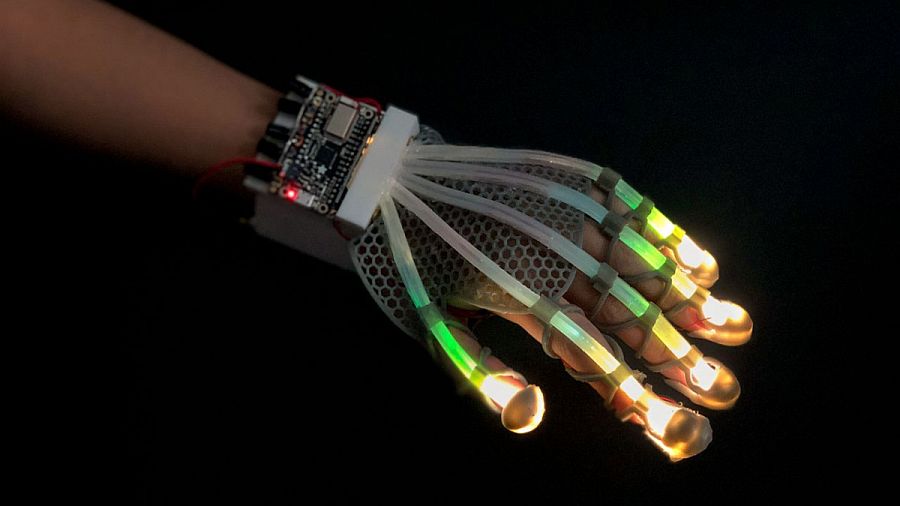

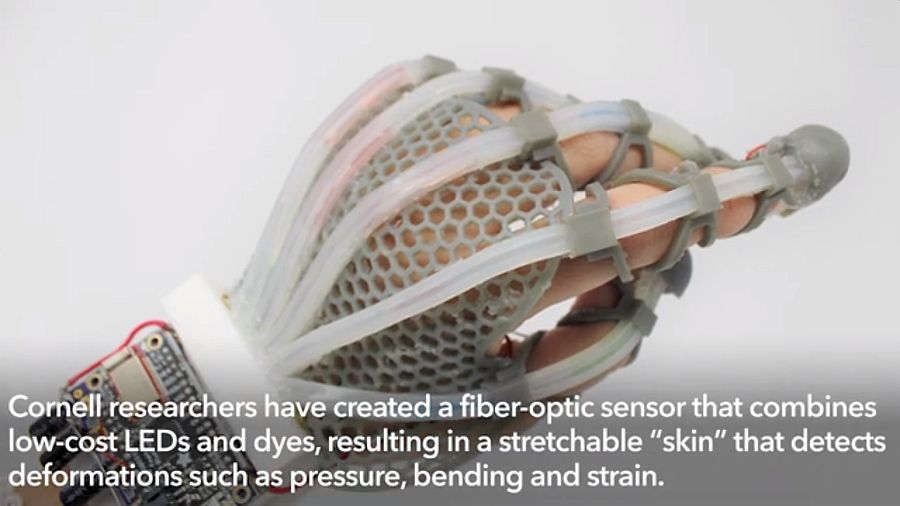

Stretchable sensors could pave the way toward new intelligent soft systems. Working along those lines, Cornell researchers have combined fiber-optic sensors with inexpensive LEDs (light-emitting diodes) and dyes. The result is a stretchable “skin” that can detect deformations such as pressure, bending and strain.

The resulting sensor can give soft robotic systems a sense of touch. The technology could also let people using augmented reality feel, in real time, the same rich physical sensations of objects that they experience in the natural world.

However, the study’s lead researcher, Rob Shepherd, also envisions the tech being used in physical therapy and sports medicine.

Optoelectronic prosthesis

The idea is not new. In fact, this tech is an iteration of Shepherd’s earlier stretchable sensor, developed in 2016. In that design, light was sent through an optical waveguide, and a photodiode measured the variation in the beam’s intensity.
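To illustrate how an intensity reading can be turned into a deformation signal, here is a minimal Python sketch. It assumes the photodiode reading is compared against an undeformed baseline and expressed as optical power loss in decibels. The function name and the sample readings are hypothetical and are not taken from the study.

```python
import math


def power_loss_db(baseline_intensity: float, measured_intensity: float) -> float:
    """Return optical power loss in decibels relative to an undeformed baseline."""
    if baseline_intensity <= 0 or measured_intensity <= 0:
        raise ValueError("intensities must be positive")
    return 10.0 * math.log10(baseline_intensity / measured_intensity)


# Hypothetical photodiode readings in arbitrary units (assumed values).
baseline = 1.00   # waveguide at rest
reading = 0.50    # waveguide bent or pressed, so less light arrives

loss = power_loss_db(baseline, reading)
print(f"power loss: {loss:.2f} dB")
```

A larger loss would indicate a stronger deformation, but the mapping from loss to a specific bend or pressure depends on a calibration that this sketch does not attempt.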

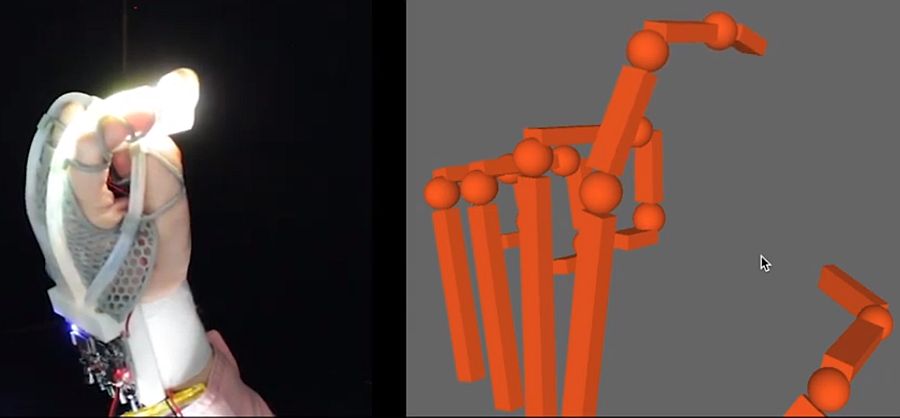

The optoelectronic prosthesis was used both to grasp objects and to determine their texture and shape.

Stretchable lightguide for multimodal sensing (SLIMS)

For the current project, the researchers again used a stretchable lightguide, but with a variation. The aim was to develop a stretchable lightguide for multimodal sensing (SLIMS).

The fingers of the hand were replaced with long tubes, each of which has its own embedded polyurethane elastomeric core.

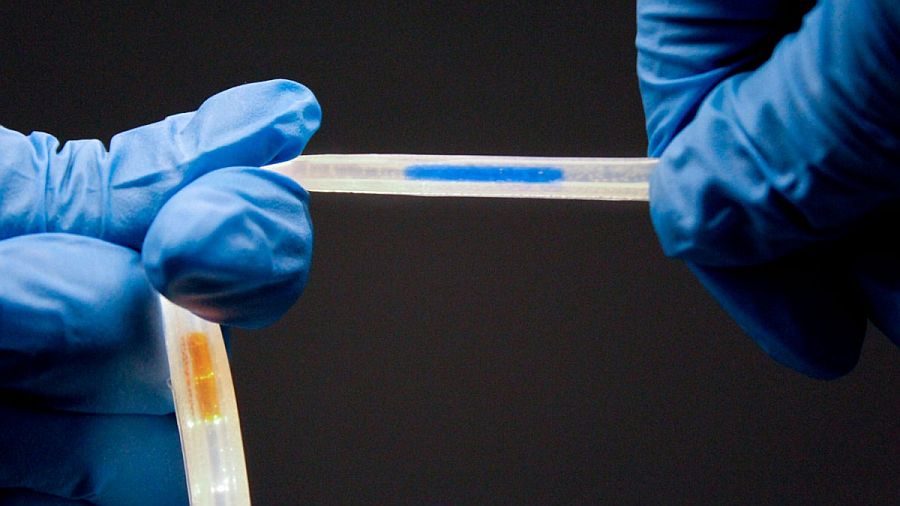

One core is kept transparent, the next is filled with absorbing dye, and each tube is connected to an LED.

Each core is coupled to a red-green-blue (RGB) sensor chip so that it can register even minute geometric changes in the optical path of the light.

Because each core detects a range of deformations, the number of outputs increases. This lets the sensor encode different kinds of deformation, such as pressure, bending or elongation.

To take it further, the researchers paired the tech with a mathematical model that decouples the various deformations in real time and, like a human hand, pinpoints their exact locations and magnitudes.
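The article does not describe the researchers' model, so the following Python sketch only illustrates the general idea of decoupling. It assumes, purely for illustration, that two sensor outputs respond linearly to two deformation modes (stretch and bend), and it inverts that relationship with Cramer's rule. The sensitivity values, names and readings are all hypothetical.

```python
def decouple(outputs, sensitivity):
    """Solve sensitivity @ deformations = outputs for a 2x2 system (Cramer's rule)."""
    (a, b), (c, d) = sensitivity
    y1, y2 = outputs
    det = a * d - b * c
    if abs(det) < 1e-12:
        raise ValueError("the two outputs cannot distinguish the two deformations")
    stretch = (y1 * d - b * y2) / det
    bend = (a * y2 - c * y1) / det
    return stretch, bend


# Hypothetical calibration: rows are sensor outputs, columns are deformation modes.
SENSITIVITY = (
    (2.0, 1.0),  # transparent-core signal per unit of (stretch, bend)
    (0.5, 3.0),  # dyed-core signal per unit of (stretch, bend)
)

# Hypothetical readings produced by a mix of stretching and bending.
stretch, bend = decouple((0.5, 0.4), SENSITIVITY)
print(f"stretch={stretch:.2f}, bend={bend:.2f}")
```

With these assumed numbers the sketch recovers a stretch of about 0.2 and a bend of about 0.1. The point is that adding independent outputs is what makes mixed deformations separable; a real system would need a calibrated and likely nonlinear model.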

SLIMS can be operated with small, low-resolution optoelectronics. That is quite the opposite of silica-based fiber-optic sensors, which require high-resolution detection equipment.

Takeaway

With this tech, machines will be able to measure tactile interactions in much the same way that smartphone cameras are used today, in effect using vision to measure touch, the researchers claimed.

It looks promising and could be one of the most scalable ways to revolutionize soft robotics, prosthetics and sports, along with VR and AR immersion.

Via: Cornell University